Users, Usage, Usability, and Data

The day job (and a new podcast) have been getting the bulk of my time lately, but I’m way overdue to talk about data and quadrants.

If you need a bit of context or a refresher on my stance, this post talks about my take on Brian Marick’s quadrants (used famously by Gregory and Crispin in their wonderful Agile Testing book). I assert that the left side of the quadrant is well suited for programmer ownership, and that the right side is suited for quality / test team ownership. I also assert that the right side knowledge can be obtained through data, and that one could gather what they need in production – from actual customer usage.

And that’s where I’ll try to pick up.

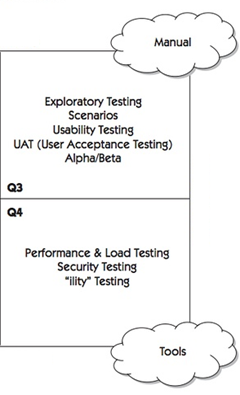

Agile Testing labels Q3 as “Manual”, and Q4 as “Tools”. This is (or can be) generally true, but I claim that it doesn’t have to be true. Yes, there are some synthetic tests you want to run locally to ensure performance, reliability, and other Q4 activities, but you can get more actionable data by examining data from customer usage. Your top-notch performance suite doesn’t matter if your biggest slowdown occurs on a combination of graphics card and bus speed that you don’t have in your test lab. Customers use software in ways we can’t imagine – and on a variety of configurations that are practically impossible to duplicate in labs. Similarly, stress suites are great – but knowing what crashes your customers are seeing, as well as the error paths they are hitting is far more valuable. Most other “ilities” can be detected from customer usage as well.

Evaluating Q3 from data is … interesting. The list of items in the graphic above is from Agile Testing, but note that Q3 is the quadrant labeled (using my labels) Customer Facing / Quality Product. You do Alpha and Beta testing in order to get customer feedback (which *is* data, of course), but beyond that, I need to make slightly larger leaps.

To avoid any immediate arguments, I’m not saying that exploratory testing can be replaced with data, or that exploratory testing is no longer needed. What I will say is that not even your very best exploratory tester can represent how a customer uses a product better than the actual customer.

So let’s move on to scenarios and, to some extent, usability testing. Let’s say that one of the features / scenarios of your product is “Users can use our client app to create a blog post, and post it to their blog”. The “traditional” way to validate this scenario is to make a bunch of test cases (either written down in advance (yuck) or discovered through exploration) that create blog entries with different formatting and options, and then make sure the app can post to whatever blog services are supported. We would also dissect the crap out of the scenario and ask a lot of questions about every word until all ambiguity is removed. There’s nothing inherently wrong with this approach, but I think we can do better.

Instead of the above, tweak your “testing” approach. Instead of asking, “Does this work?”, or “What would happen if…?”, ask “How will I know if the scenario was completed successfully?” For example, if you knew:

- How many people started creating a blog post in our client app?

- Of the above set, how many post successfully to their blog?

- What blog providers do they post to?

- What error paths are being hit?

- How long does posting to their blog take?

- What sort of internet connection do they have?

- How long does it take for the app to load?

- After they post, do they edit the blog immediately (is it WYSIWYG)?

- etc.

With the above, you can begin to infer a lot about how people use your application, discover outliers, answer questions, and perhaps discover new questions you want to have answered. And to get an idea of whether they may have liked the experience, perhaps you could track things like:

- How often do people post to their blog from our client app?

- When they encounter an error path, what do they do? Try again? Exit? Uninstall?

- etc.
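As a rough illustration of how a few of the questions above could be answered from usage data, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Event` record, the event names (`post_started`, `post_succeeded`, `post_failed`, `app_exit`), the provider and error-code strings, and the sample data are all hypothetical, and a real telemetry pipeline would look different.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Event:
    """One hypothetical telemetry record; field names are illustrative only."""
    user_id: str
    name: str             # e.g. "post_started", "post_succeeded", "post_failed"
    provider: str = ""    # blog provider, set on successful posts
    error_code: str = ""  # set on failed posts
    duration_ms: int = 0  # time taken to post, set on successful posts


def summarize_posting(events):
    """Summarize the blog-post scenario from events that are time-ordered per user."""
    started = {e.user_id for e in events if e.name == "post_started"}
    succeeded = {e.user_id for e in events if e.name == "post_succeeded"} & started
    durations = [e.duration_ms for e in events if e.name == "post_succeeded"]

    # What does each user do immediately after hitting an error path?
    after_error = Counter()
    last_event_by_user = {}
    for e in events:
        if last_event_by_user.get(e.user_id) == "post_failed":
            after_error[e.name] += 1
        last_event_by_user[e.user_id] = e.name

    return {
        "started": len(started),
        "succeeded": len(succeeded),
        "completion_rate": len(succeeded) / len(started) if started else 0.0,
        "providers": Counter(e.provider for e in events if e.name == "post_succeeded"),
        "errors": Counter(e.error_code for e in events if e.name == "post_failed"),
        "median_post_ms": median(durations) if durations else None,
        "after_error": after_error,
    }


# Made-up sample data: four users, two of whom eventually post successfully.
SAMPLE_EVENTS = [
    Event("u1", "post_started"),
    Event("u1", "post_succeeded", provider="wordpress", duration_ms=1200),
    Event("u2", "post_started"),
    Event("u2", "post_failed", error_code="AUTH_EXPIRED"),
    Event("u2", "post_started"),
    Event("u2", "post_succeeded", provider="blogger", duration_ms=3000),
    Event("u3", "post_started"),
    Event("u3", "post_failed", error_code="TIMEOUT"),
    Event("u3", "app_exit"),
    Event("u4", "post_started"),
]

if __name__ == "__main__":
    for key, value in summarize_posting(SAMPLE_EVENTS).items():
        print(f"{key}: {value}")
```

The counts are per user rather than per attempt, so a user who fails once and then succeeds still counts as a completed scenario. Whether that is the right definition of "success" is exactly the kind of question the data should prompt.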

Of course, you can get subjective data as well via short surveys. These tend to annoy people but, used strategically and sparingly, they can help you gauge the true customer experience. I know of at least one example at Microsoft where customers were asked to provide a star rating and feedback after using an application – over time, the team could use available data to accurately predict what star rating customers would give their experience. I believe that’s a model that can be reproduced frequently.
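I don’t know what kind of model that team actually built, so the following is only a toy sketch of the general idea: fit survey star ratings against one usage signal, then use the fit to estimate ratings for sessions that had no survey. The single predictor (errors per session), the function names, and the numbers are all made up for illustration, and ordinary least squares is my stand-in rather than their method.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict_stars(slope, intercept, errors_per_session):
    """Estimate a star rating and clamp it to the 1-5 survey scale."""
    return min(5.0, max(1.0, slope * errors_per_session + intercept))


# Hypothetical surveyed sessions: errors hit in the session vs. stars given.
ERRORS_PER_SESSION = [0, 1, 2, 3, 4]
SURVEY_STARS = [5, 4, 3, 2, 1]

if __name__ == "__main__":
    slope, intercept = fit_line(ERRORS_PER_SESSION, SURVEY_STARS)
    for errors in (0, 2, 10):
        print(errors, predict_stars(slope, intercept, errors))
```

A real model would need many more signals and far more data, and it would need to be re-checked against fresh survey responses whenever the predictions stop matching what customers say.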

Does a data-prominent strategy work everywhere? Of course not. Does it replace the need for testing? Don’t even ask – of course not. Before taking too much of the above to heart, answer a few questions about your product. If your product is a web site or web service or anything else you can update (or roll back) as frequently as you want, of course you want to rely on data as much as possible for Q3 and Q4. But, even for “thick” apps that run on a device (computer, phone, toaster) that’s always connected, you should also consider how you can use data to answer questions typically asked by test cases.

But look – don’t go crazy. There are a number of products where long tests (what I call Q3 and Q4 tests) can be replaced entirely by data. But don’t blindly decide that you no longer need people to write stress suites or do exploratory testing. If you can’t answer your important questions from analyzing data, by all means, use people with brains and skills to help you out. And even if you think you can get all your answers with data, use people as a safety net while you make the transition. It’s quite possible (probable?) to gather a bunch of data that isn’t actually the data you need, and then mis-analyze it and ship crap people don’t want – that’s not a trap you want to fall into.

Data is a powerful ally. How many times, as a tester, have you found an issue and had to convince someone it was something that needed to be fixed or customers would rebel? With data, rather than rely on your own interpretation of what customers want, you can make decisions based on what customers are actually doing. For me, that’s powerful, and a strong statement towards the future of software quality.

Excellent points, but what happened to business logic verification with a given build or changeset? I infer that you think either that this problem has been so thoroughly solved that there’s nothing else to say about it, or that you don’t care. Please clarify.

If we’re talking about the same thing, I’d hope those are covered by programmer tests (unit, checkin, functional, etc.).

Yes, thanks Alan, although most of these are what I’d call unit and regression tests. But what do I know, I look like an evil geek hexagon 😉

“over time, the team could use available data to accurately predict what star rating customers would give their experience” – but can the team predict the reasons why? How do you respond when the data collected clashes with the business requirement, or the overarching vision of the product?

The models they built for the prediction were based on subjective survey questions – which they can still use anytime they think the models they are using aren’t accurate anymore.

Even with the best data, you still need to get direct feedback. Initially, it may be from survey questions, but eventually – as your product gets more mature, you can also pull subjective data from social media (e.g. twitter, fb, forums, etc.).

I think what Mark is saying is that you need better information on the business behaviors of your SUT than analytics can give you, so functional E2E or regression testing is still important, live or not. You still need some code with an oracle (maybe a simplistic one) to verify that the correct behavior happens, independent of user agent and end-user behaviors. Analytics are great and powerful for what they’re good at, but you still need regression testing. My book MetaAutomation addresses a better way to do this (to publish this summer).

Is your book targeted at testers, developers, or both? Over time, I’ve come to realize that it’s generally much more efficient to have programmers write tests for regression and business logic than to have a separate team do that work.

No, not always, but it’s an approach that should at least be considered.

It makes sense to me to have programmers write regression and business logic tests, in the sense that they have the skills and tech chops to make it happen in a fully powered language (e.g. C#, Java, C++). OTOH, I’m a little conflicted, in that I’ve seen unit tests that did not allow for refactoring because they made assumptions about implementation that a tester would not make. Also, I wonder how the devs find the time to build a really complete regression suite, given how much time pressure they’re under anyway, but maybe you already have a solution to this problem.

I’m curious what you think of this: given that the devs are focused on getting the product to work and work well, IMO they’re not in the best position to ask questions about quality that a tester would ask.

My book is for testers, devs, leads, managers, etc.: basically everyone who writes automation and all customers of the information that automation can create. The focus is on regression automation, but TDD and perf tests can benefit where they overlap with the regression automation.

Developers are under pressure to deliver quality code – not just to get features done. They’re asking, and answering, questions about functionality. Others (usually testers) ask and answer questions about usefulness, availability, etc.

>>”Agile Testing labels Q3 as “Tools”, and Q4 as “Manual”.”

Actually, Agile Testing labels Q4 as “Tools” and Q3 as “Manual.”

oops – transposition error. I’ll fix that.